Last week, Chancellor Goldstein posted a very interesting piece related to the Central Limit Theorem (CLT). I think experienced statistics instructors will concur that few students truly grasp the essence of the Theorem. I myself did not fully appreciate the CLT when I was an undergraduate student. For the untrained mind, the term “random variable” tends to elicit a negative emotional reaction, because colloquially “random” is synonymous to “chaotic” or “arbitrary,” which is undesirable. The beauty of the Central Limit Theorem is that it tells how random numbers should behave. Random numbers are not just some crazy numbers, but they must follow equations described in the Chancellor’s blog. When introducing random variables, I enjoy telling this story about the Nobel laureate Murray Gell-Mann. In the 1950’s, while being a Caltech professor, Gell-Mann also served as a consultant at the RAND Corporation, a military think tank. One day, he received the book “RAND Table of Random Numbers,” and to his amusement he found an errata sheet in it. The RAND mathematicians needed to supply “corrections” to some of the random numbers!

The Central Limit Theorem is so named because of its central importance to probability theory; inferential statistics work because we know, on the basis of the CLT, how to quantify random variations (again they are not arbitrary). Now that it’s the election season, we are bombarded with poll numbers and their margins of error. Polling is one of the most encountered applications of inferential statistics in our daily life, yet I suspect that many people have difficulty connecting their textbook statistical knowledge to news reports. The problem is that introductory textbooks try to be as general as possible, and statistical concepts are expressed in terms of ,

, and all those Greek letters (literally). For students less comfortable with algebra, the central idea got obscured in the profusion of mathematical symbols. Because the term margin of error is mentioned so frequently in news media, let me give a quick-and-dirty way to estimate it: it is the reciprocal of the square root of the number of people polled (see the technical note below for justification). For example, if we read that a New York Times/CBS poll found that the President’s approval rate was 45%, and this poll was based on interviewing 1,009 likely voters, then the margin of error is

or about 3%. In the same poll, if we asked a subgroup of 301 Republicans about their opinion, the margin of error would be

or 6%. You should use your calculator to verify some of the published polls. Although I know the math, I still find it amazing that the margin of error does not depend on the size of the population, and surveys of the entire US (population 300 million) typically involve samples of only about 1,000 respondents. You will still need a sample of about 1,000 to conduct a poll in Iceland (population 300 thousand), or India (population 1.2 billion), to achieve a margin of error of 3%.

We all know that bias in sampling can produce unreliable poll results. However, I want to highlight the fact that even if we have a fair and ideal condition, we might still miss the target. The above-mentioned method for margin of error ( where

is the sample size) applies to a 95% confidence level—the level adopted by essentially all polling organizations. This means that we should expect that 5 out of 100 samples, or 1 out of 20 samples, with confidence intervals not containing the true value. With so many polls generated on any given day, it is highly likely that some numbers are simply random fluctuation.

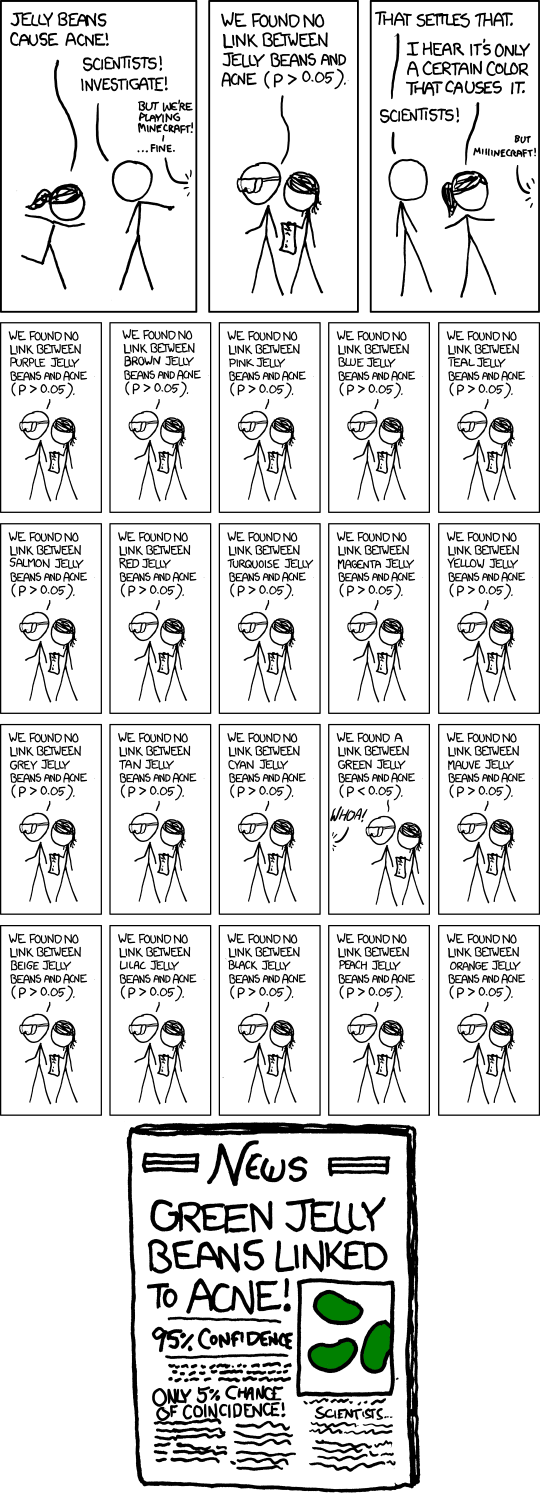

Now I want to switch from estimation to hypothesis testing, which can be viewed as the art of distinguishing pattern from randomness. (Recall, the CLT predicts the behavior of random numbers.) We need to recognize that a test of statistical significance does not prove anything conclusively. It only specifies a p-value, which is quantitative statement of the likelihood that the observed result occurred strictly by randomness alone. Conventionally, a p-value of 0.05, or 1 in 20, is considered significant, but in modern times, there are many problems with this simple-minded criterion. The widespread availability of computer software has rendered virtually effortless some statistical procedures that not long ago required days or even months of work. Of every 100 tests, you expect 5, on average, to be “significant at the 95% level” purely because of random variation. Therefore, if one tests many hypotheses, some of the hypotheses (1/20 on average) will come up significant purely due to the nature of randomness. The comic below from xkcd brilliantly explains the basic problem: in this imagined example, there is no overall effect of jelly beans on acne. But if you try repeatedly with different colors (purple, brown, pink, blue, etc), some colors will eventually reach the significance threshold .

This comic illustrates that false positive becomes a near certainty in multiple testing. Source: http://xkcd.com/882/

Nowadays, media are quite eager to sensationalize some seemingly shocking claims. Undoubtedly you have encountered claims like “coffee causes pancreatic cancer,” “Type A personality causes heart attacks,” “trans-fat is a killer,” and more. In an article published in the September 2011 issue of Significance magazine, S. Stanley Young and Alan Karr bluntly state that “any claim coming from an observational study is most likely to be wrong.” They examined the claim “females eating cereals leads to more boy babies” published in the Proceedings of the Royal Society. The researchers of that study used a food questionnaire not of cereal alone but of 132 different food items. Young and his collaborators computed t-tests using the two periods of data supplied by the researchers, and found that the p-values are essentially uniformly distributed between 0 and 1. To see how small p-values are simply the result of chance, you should try this web animation “there must be something buried in here somewhere.”

When pioneering statisticians developed the standard techniques, they were dealing with far less data using much inferior technology. I hope readers of this blog become aware of the rampant “scattershot” approach to statistically significant but false discoveries merely due to the property of “random” numbers.

Technical Note. Consulting a statistics textbook, and you will find the margin of error at a

level of confidence of an estimated population proportion

expressed as

where is the sample size and

can be located in a table. I think this formula intimidates a lot of students, and most of them will never use it after the final exam. When talking about the margin of error in polls, because essentially everyone uses a 95% confidence level,

. In today’s politics, the country is roughly evenly divided, and 0.5 is a reasonable value for

. With these assumption, the margin of error becomes much less intimidating:

. Personally, I think no student should exit a statistics (or any quantitative) course without knowing this approximation.

Ini merupakan ketidakcocokan di antara text ke teman simpatik serta naskah MS Word. Pada akhirnya, apa yang lebih membahagiakan buat dibaca? Tambah pelbagai kalimat dan pembaca Anda akan melepasnya. Asumsikan menggunting kalimat dari suatu kalimat, lalu memasukkan ke kantong plastik buat bikin beberapa kalimat pendek.

Pukulan tas! Tempatkan kalimat Anda serta melakukan eksperimen sekarang ini. Apa kemungkinan celotehpraja ” celotehpraja “untuk membaginya ujaran paling akhir Anda jadi dua kalimat? Bisakah Anda tempatkan ujaran dari awalnya hingga akhir dan kebalikannya

Jadi apa barangkali buat membumbui buah pikiran baru buat perpanjang kalimat kecil ini? Berikut ini trik bikin sebuah artikel situs yang baik, serta atas itu Anda bakal menciptakan text yang semakin lebih maju dan menarik memanfaatkan konstruksi berlainan untuk kalimat Anda. Tiap-tiap bidang punya bahasanya sendiri.

Even if you fall into a trap. Even if you fall into the trap of crisis. I want to accomplish what I envisioned. wherever we go 파워볼 중계 화면

Nice info, thanks for share

Great! Visit my blog

Useful and educative article. Thanks for sharing

Thank you for the great information. my article Sains301

يکي ديگر از پرکاربردترين مصالحي که در ساختمانسازي از آن استفاده ميشود سراميک است که خود انواع متعددي دارد و در بهترین مارک سرامیک کف قسمتهاي مختلف يک ساختمان ازجمله آشپزخانه، حمام و سرويس بهداشتي، حياط، پارکينگ و حتي نماي ساختمان، گلخانه و حوضهاي تزئيني کاربرد دارند.

best-brand-floor-ceramics

Thank you for the great information.

https://pencarian.id

It was so useful

آموزش زبان در خواب انگلیشدان به شما بهترین راهکار ها برای یادگیری زبان انگلیسی در خواب را نشان میدهد. به طور کلی انگلیسی در خواب ویادگیری آن کاملا امکان پذیر است به شرط به کارگیری روش های اصولی یادگیری زبان در خواب

همچنین با انگلیشدان englishdon میتوانید هر چه سریع تر اصطلاحات بانکی به انگلیسی را بیاموزید

همچنین لیست کاملی از لیست کلمات انگلیسی مربوط به بانک نیز ازائه شده که میتوانید بخوانید

در پایان نیز نمونه هایی از نمونه هایی از مکالمات روزمره انگلیسی در بانک ارائه داده شده است

مطالعهی اصطلاحات بانکی به انگلیسی انگلیشدان را از دست ندهید

تدریس خصوصی زبان انگلیسی در تهران در انگلیشدان انتخابی سریع در تقویت زبان انگلیسی است. در سیستم تدریس خصوصی زبان انگلیسی در منزل انگلیشدان که اغلب به صورت آموزش آنلاین زبان انگلیسی برگزار شده که با تدریس یک استاد خصوصی زبان انگلیسی برتر و در یکی از بهترین کلاس های خصوصی زبان انگلیسی با بهترین شرایط و با نظارت بهترین استاد زبان انگلیسی استاد محسن کشاورزی مدیریت وبسایت آموزش زبان انگلیسی انگلیشدان برگزار میشود برای ایلتس آماده شوید، تقویت مکالمه زبان انگلیسی و تقویت لیسنینگ زبان انگلیسی را در سطوح بالا تجربه کنید کلاس های آنلاین زبان انگلیسی انگلیشدان انتخابی بسیار مناسب برای یک کلاس آموزش زبان آنلاین بوده و شرایط بسیار خوبی را در قالب یک کلاس زبان آنلاین برای شما فراهم میآورد

مدیریت انگلیشدان استاد محسن کشاورزی در مسیر یادگیری زبان انگلیسی در آموزش خصوصی زبان انگلیشدان برای شما ارزوی موفقیت میکند

Great article

nice information

Useful and educative article. Thanks for sharing

nice articles !!!

https://www.mohdpurwadi.my.id

https://www.mastimon.com/

Hey there this is somewhat of off topic but I

was wondering if blogs use WYSIWYG editors or if you have to manually code with HTML.

I’m starting a blog soon but have no coding know-how so I wanted to get guidance from someone with experience. Any help would be greatly appreciated!

Visit my page: travellers auto insurance